I’m no doubt showing my age, but the phrase “it’s not your father’s Oldsmobile” keeps popping up in my head each time I get a call to solve some type of modern-day Diagnostic Dilemma.

The phrase was popularized in a series of late-1980s television commercials, one of which featured Beatles drummer Ringo Starr, designed to appeal to younger buyers. Although the TV advertisements proved memorable, General Motors phased out Oldsmobile in 2004, and the culture that grew around the brand faded with it.

This month’s Diagnostic Dilemma involves a pristine 1995 Buick Park Avenue with a customer complaint of dimming instrument cluster lights, a flickering voltmeter needle, a driveability bucking complaint and a dead battery. The alternator had been replaced due to an indication of excessive AC ripple on the shop’s hand-held battery and charging system tester. A P1630 trouble code was present, indicating a high/low condition with battery voltage.

Not that the dilemma itself was all that challenging, but the case illustrates that we are no longer diagnosing and repairing our father’s Oldsmobiles. The idea of a one-size-fits-all method of diagnosing charging systems no longer applies, even to a ’95 Buick Park Avenue. To better understand how modern charging systems work, let’s begin by understanding how the battery, which is the heart of the starting/charging system, is rated and tested.

Battery State of Charge

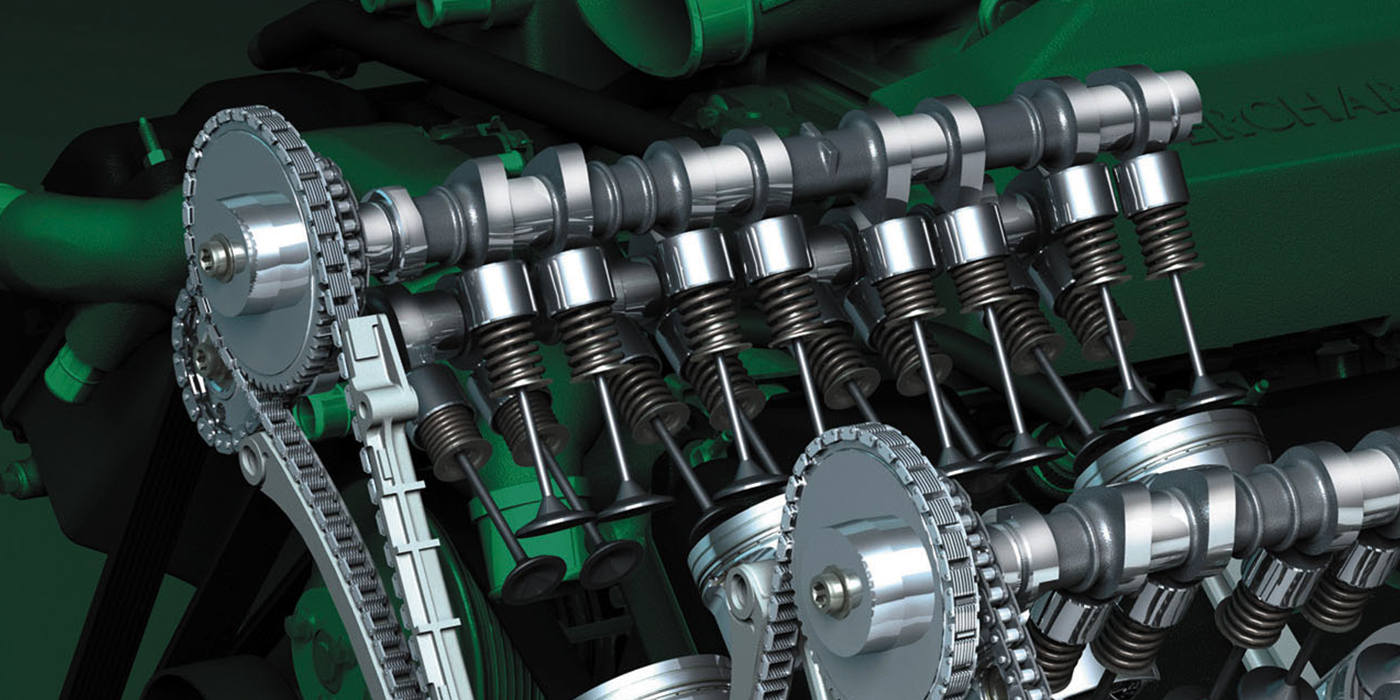

Because modern charging system strategies are based upon maintaining a battery’s state of charge (SOC), it’s important to understand that a modern 12-volt battery is made of six cells connected in series, with each cell producing 2.1 volts at full SOC. Each cell consists of positive and negative plates suspended in an electrolyte composed of sulfuric acid (H2SO4) mixed with water (H2O). At full charge, the electrolyte has a specific gravity of approximately 1.270, meaning it is about 1.270 times as dense as water.

When the battery is fully discharged, the battery plates absorb the chemical components of the sulfuric acid, leaving nearly pure water, which is why fully discharged batteries freeze in cold weather. For safety’s sake, if you suspect that a battery is frozen, allow it to reach room temperature before testing or recharging.

When testing batteries, remember that each cell is a miniature battery unto itself. Although the other five “mini-batteries” or cells might be in good condition, a single bad cell will reduce open-circuit voltage (OCV) and cold cranking amperage (CCA) at the terminals.

Open-circuit voltage is measured with a digital multimeter (DMM) attached to the battery terminals and with no electrical load on the battery or with the cables disconnected. A fully charged battery in good condition should produce 12.6 volts at the terminals.

If the OCV is higher than 12.6 volts, the battery likely contains a surface charge, which is the residual from charging voltage. While a surface charge will eventually dissipate, the traditional method for removing surface charge is to turn on the headlamps (or apply a 10.0-amp load) for five minutes. If the terminal voltage falls below 12.6 volts under this load, a fully charged battery in good condition should recover to 12.6 volts at the terminals once the load is removed.
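The open-circuit voltage check described above can be sketched in a few lines of Python. This is only an illustration: the 12.6-volt full-charge figure and the 2.1 volts per cell come from the text, while the lower voltage-to-SOC points are commonly cited rule-of-thumb values that I'm treating as assumptions, and the function names are hypothetical.

```python
# Rough open-circuit voltage (OCV) helpers for a rested 12-volt battery.
# 12.6 V at full charge is from the article; the 75/50/25% points are
# rule-of-thumb ASSUMPTIONS, not manufacturer specifications.
FULL_CHARGE_OCV = 12.6  # six cells in series at 2.1 V each

OCV_TO_SOC = [
    (12.6, 100),
    (12.4, 75),
    (12.2, 50),
    (12.0, 25),
]


def has_surface_charge(ocv_volts, margin=0.05):
    """True if OCV is above full charge, suggesting a surface charge."""
    return ocv_volts > FULL_CHARGE_OCV + margin


def estimate_soc(ocv_volts):
    """Return an approximate state of charge (percent) from OCV."""
    for threshold, soc_percent in OCV_TO_SOC:
        if ocv_volts >= threshold:
            return soc_percent
    return 0  # below 12.0 V is treated as functionally discharged


print(has_surface_charge(13.1))  # reading taken right after charging
print(estimate_soc(12.3))
```

If `has_surface_charge` returns true, the reading shouldn't be trusted until the surface charge has been removed with a load and the battery has been retested.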

A bad cell will cause cranking voltage to fall well below the 10-volt threshold required for the on-board electronics to operate correctly. In addition, a bad cell will cause the charging system to work overtime trying to bring an obviously defective battery up to full SOC. While modern alternators are designed with very high amperage ratings, they are not designed to operate at continuously high current outputs.

Battery Ratings

Battery ratings are essential for testing battery condition and for understanding how the charging system works. Batteries are now rated in CCA, which is the battery’s maximum available cranking amperage at 0° F temperature when fully charged.

At 0° F, a battery produces only about 40% of its rated charge due to reduced chemical activity in the battery’s plates. Your father’s Oldsmobile required a CCA at least equal to the cubic-inch displacement of the engine. In modern times, the original equipment manufacturer (OEM) specifications for CCA are often well above that standard simply because modern vehicles can place much higher parasitic and accessory draw loads on the battery.

While not currently used to rate batteries, reserve capacity indicates how long a battery can deliver a 25-amp system draw at 80° F ambient temperature without falling below 10.5 volts across the terminals. A typical reserve capacity is about 120 minutes, which indicates how long a vehicle’s ignition and fuel systems can operate on battery power alone.

Although amp-hour ratings are usually applied to deep-cycle batteries, the amp-hour rating helps us understand discharge rates. For example, a typical automotive starter battery has a capacity of about 60 amp-hours, which means that the battery can deliver one ampere for 60 hours without dropping below 10.0 volts across the terminals. In terms of parasitic draw, a 60 amp-hour battery can supply one-quarter of an amp (0.250 amps) for 240 hours, which is approximately equal to a glove box lamp burning for 10 days before the battery is functionally discharged.
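The amp-hour arithmetic above is simple enough to sketch in Python. The function name is hypothetical, and the calculation assumes an idealized battery whose usable capacity doesn't change with discharge rate, temperature or age.

```python
def hours_until_discharged(capacity_amp_hours, draw_amps):
    """Idealized run time: amp-hours divided by a constant current draw."""
    if draw_amps <= 0:
        raise ValueError("draw_amps must be greater than zero")
    return capacity_amp_hours / draw_amps


# The glove box lamp example: 60 amp-hours at a 0.250-amp parasitic draw.
hours = hours_until_discharged(60, 0.250)
print(hours)       # 240.0 hours
print(hours / 24)  # 10.0 days
```

The same function can be used to judge any measured parasitic draw against the time a vehicle typically sits between drives.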

Remember that deep-cycle batteries are built with thicker plates to better accommodate deeper, long-term discharge and recharging cycles. In contrast, starter batteries are built with thinner plates for quick discharge and recharging cycles. For that reason, starter batteries are easily ruined by repeated deep discharge/recharge cycles.

Battery Testing

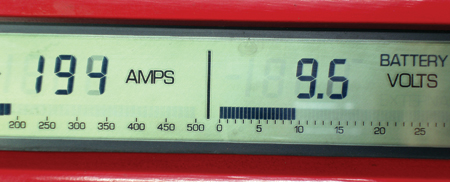

Since the battery is the foundation of the vehicle’s electrical system, it’s important to understand the various methods of testing battery charge and cell condition. Conductance testing is currently the most popular method of testing the battery charge and condition of the cells.

Although conductance testing isn’t an infallible battery test, it’s accurate at least 95% of the time. The advantage of conductance testing is that it’s not as reliant on the battery’s state of charge, which reduces the need to recharge the battery before testing.

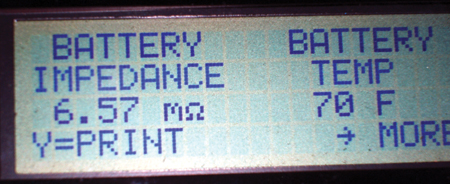

Due to its high discharge rate, carbon-pile load testing has generally fallen into disuse. However, the ability to load-test batteries and alternators can prove valuable in some cases. Since the battery must be loaded at one-half of its rated CCA for 15 seconds, the battery must be fully charged with its core temperature at 70° F.

If the battery voltage remains above 10 volts after being discharged for 15 seconds, it’s considered good. In some cases, a new battery with a bad cell can pass a carbon-pile load test because many new batteries actually produce about 125% of their rated CCA.
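As a rough sketch, the carbon-pile load test described above comes down to two numbers: the load to apply and the pass threshold. The Python below uses the figures from the text (one-half of rated CCA for 15 seconds, pass if voltage stays above 10 volts); the function names are hypothetical, and the code assumes the battery is already fully charged and at about 70° F.

```python
def load_test_amps(rated_cca):
    """Carbon-pile load to apply: one-half of the rated CCA."""
    return rated_cca / 2


def passes_load_test(volts_after_15_seconds, minimum_volts=10.0):
    """Pass if terminal voltage stays above the threshold under load."""
    return volts_after_15_seconds > minimum_volts


# Example: a battery rated at 600 CCA.
print(load_test_amps(600))     # 300.0 amps for 15 seconds
print(passes_load_test(10.4))  # True
print(passes_load_test(9.5))   # False
```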

Battery hydrometers are used to test the specific gravity of the electrolyte. If a battery is fully charged, the specific gravity should be at least 1.250 at room temperature. Older batteries will generally produce a lower specific gravity due to plate wear. If the battery is fully charged, the specific gravity shouldn’t vary more than 0.050 among the cells. While hydrometer testing is the most reliable method of testing cell condition, hydrometers can only be used on batteries with removable cell caps.
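A hydrometer check of all six cells can be summarized with a short Python sketch. The 1.250 minimum comes from the text; the 0.050 maximum cell-to-cell spread is the commonly cited figure and is treated here as an assumption, as is the function name.

```python
def check_cells(readings, minimum=1.250, max_spread=0.050):
    """Return (all_cells_charged, spread_ok) for six hydrometer readings.

    minimum is the article's full-charge figure; max_spread is an
    ASSUMED commonly cited limit for variation among cells.
    """
    if len(readings) != 6:
        raise ValueError("a 12-volt battery has six cells")
    all_cells_charged = min(readings) >= minimum
    spread_ok = (max(readings) - min(readings)) <= max_spread
    return all_cells_charged, spread_ok


# Five healthy cells and one weak cell (the fourth reading).
print(check_cells([1.265, 1.260, 1.265, 1.200, 1.260, 1.265]))
# (False, False)
```

A battery that fails the spread check after a full recharge most likely has a bad cell, regardless of how the other five cells read.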

Battery Charging Modes

Although terminology and specifications might vary according to source, let’s take a brief look at how three different charging modes are required to establish a full SOC. The three different modes for recharging a lead-acid battery are bulk, absorption and float.

About 80% of the charging process takes place in the bulk mode, which is typified by high amperage flow and low terminal voltage. The bulk mode should last about five to eight hours, depending upon the original SOC, and will end when 14.4 volts is measured at the battery terminals.

The absorption mode is typified by lower amperage flow while maintaining 14.4 volts at the battery terminals. The absorption mode should last seven to 10 hours to chemically saturate the battery plates. The lack of an absorption mode will cause the battery plates to sulfate because the battery plates aren’t fully charged. The float mode is, of course, designed to maintain full saturation of the battery plates by maintaining 13.2 to 13.4 volts at less than 1 amp current flow at the battery terminals.
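To make the three modes easier to picture, here is a simplified Python sketch that labels a charger's current mode from terminal voltage and current. The voltage and current figures come from the text; the 0.05-volt tolerance and the function name are assumptions, and a real smart charger uses timing and current trends rather than a single reading.

```python
ABSORPTION_VOLTS = 14.4          # bulk ends and absorption holds here
FLOAT_RANGE = (13.2, 13.4)       # float voltage window
FLOAT_MAX_AMPS = 1.0             # float current is under 1 amp
TOLERANCE = 0.05                 # ASSUMED meter tolerance in volts


def charging_mode(terminal_volts, charge_amps):
    """Classify a single reading as 'float', 'absorption' or 'bulk'."""
    low, high = FLOAT_RANGE
    if low <= terminal_volts <= high and charge_amps < FLOAT_MAX_AMPS:
        return "float"
    if terminal_volts >= ABSORPTION_VOLTS - TOLERANCE:
        return "absorption"
    # Voltage still climbing toward 14.4 V with current flowing.
    return "bulk"


print(charging_mode(13.0, 30.0))  # bulk
print(charging_mode(14.4, 6.0))   # absorption
print(charging_mode(13.3, 0.5))   # float
```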

The problem we have in maintaining a full battery charge is that, because many vehicles are driven on short trips, the battery never enters the absorption mode. The battery will subsequently sulfate, which will reduce its capacity and SOC. While plate sulfation can occasionally be reversed by trickle-charging at 15.0 or more volts for 24 to 48 hours, sulfation is usually accompanied by the battery plates flaking away to the bottom of the case, which reduces battery capacity.

Although extended recharging times aren’t always possible in a modern shop, keep in mind that battery state of charge plays a large role in how a modern charging system operates. When recharging a battery, remember that the charging rate is excessive when the battery feels hot to the touch or when the electrolyte begins to boil.

In addition, the integrity of the on-board electronics is endangered when charging voltage exceeds 17 volts. When recharging a battery, disconnect the battery for recharging or use a modern “smart” charger that limits charging voltage and operates in multiple charging modes.

Starting System Changes

When a battery was in good condition, the field-coil, direct-drive starter on your father’s carbureted Oldsmobile usually drew amperage equal to one-half of the engine’s cubic-inch displacement. Back in the day, the Oldsmobile’s venerable 455-cubic-inch engine quickly killed a battery that didn’t measure up to OEM specifications, especially with the longer cranking times required for cold-weather starting.
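The old rules of thumb can be written out as a quick Python sketch: starter draw of about one-half the cubic-inch displacement, and the CCA rule mentioned earlier under Battery Ratings (CCA at least equal to displacement). The function names are hypothetical, and these rules apply only to the older direct-drive starters described above, not to modern reduction-gear units.

```python
def estimated_starter_draw_amps(displacement_cubic_inches):
    """Old rule of thumb: starter draw is about half the displacement."""
    return displacement_cubic_inches / 2


def meets_old_cca_rule(battery_cca, displacement_cubic_inches):
    """Old rule of thumb: CCA should at least equal the displacement."""
    return battery_cca >= displacement_cubic_inches


# The Oldsmobile 455-cubic-inch engine.
print(estimated_starter_draw_amps(455))  # 227.5 amps
print(meets_old_cca_rule(400, 455))      # False: battery is undersized
```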

In modern times, the permanent field magnet, reduction-gear starters used on current production vehicles draw much less amperage. Because computer-controlled ignition and fuel delivery systems fire the cylinders nearly instantaneously, batteries on the very threshold of complete failure can live a very long time. This is why many batteries unexpectedly fail in the parking lots of local shopping malls.

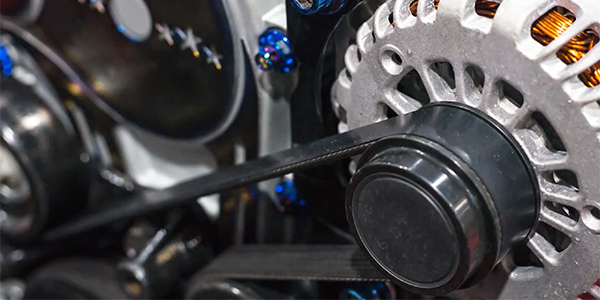

Charging System Changes

Modern charging systems generally base their charging strategies on the battery’s SOC. By contrast, the Delco-Remy alternator on your father’s Oldsmobile maintained a constant 14.2 volts across the battery terminals at 70° F ambient temperature. At 0° F, the charging voltage rose toward 15.2 volts to compensate for slower chemical activity in the battery. At 100° F, the charging voltage was reduced to about 13.8 volts to prevent boiling the water from the battery’s electrolyte.
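The temperature compensation described above can be approximated in Python by interpolating between the three points given in the text (15.2 volts at 0° F, 14.2 volts at 70° F and 13.8 volts at 100° F). Straight-line interpolation between those points, clamping outside that range, and the function name are all assumptions made for illustration; an actual regulator's curve may differ.

```python
# (temperature in deg F, charging voltage) points from the article.
COMPENSATION_POINTS = [(0.0, 15.2), (70.0, 14.2), (100.0, 13.8)]


def target_charging_volts(temp_f):
    """Linearly interpolate charging voltage; clamp outside 0-100 deg F."""
    if temp_f <= COMPENSATION_POINTS[0][0]:
        return COMPENSATION_POINTS[0][1]
    if temp_f >= COMPENSATION_POINTS[-1][0]:
        return COMPENSATION_POINTS[-1][1]
    for (t0, v0), (t1, v1) in zip(COMPENSATION_POINTS, COMPENSATION_POINTS[1:]):
        if t0 <= temp_f <= t1:
            fraction = (temp_f - t0) / (t1 - t0)
            return v0 + fraction * (v1 - v0)


for temp in (0, 35, 70, 100):
    print(temp, round(target_charging_volts(temp), 2))
```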

But times have changed. Veteran mechanics might recall Honda designing battery-charging strategies during the 1980s that would increase fuel economy. Honda used an electric load detect (ELD) module that communicated with the engine control module (ECM).

At idle speed, the ELD would de-activate the alternator until battery voltage dropped below a specified threshold or until the ELD detected an electrical load. It was easy to assume that the alternator wasn’t working because the alternator usually wouldn’t begin charging until the headlamps were turned on to apply an electrical load.

While I can’t go into detail in this space, suffice it to say that the charging modes of many modern charging systems are controlled by the powertrain control module (PCM). While most modern computer-controlled charging systems take ambient temperature into consideration, they might also weigh other factors, such as maximizing fuel economy, when calculating the alternator’s charging rate.

To illustrate, some General Motors vehicles monitor battery current with an inductive current sensor on the battery cable that detects amperage flow to and from the battery. In any event, the charging mode is determined by programming in the PCM. Long story short, charging systems should no longer be tested by attaching a DMM and looking for 14.2 volts at the battery with the engine running.

Our Father’s Park Avenue

Going back to our case study, the battery would pass a conductance test, but was badly discharged. A P1630 trouble code indicated a high/low voltage condition in the system. The client shop had replaced the alternator because its testing equipment indicated excessive AC ripple that was likely caused by a defective alternator diode.

I’d like to emphasize that diagnosing a charging system against a badly discharged or defective battery often confuses the diagnostic process. To avoid downtime recharging and testing the vehicle battery, I substitute a known-good battery when testing a charging system.

In this case, no substitute was available. Nevertheless, a preliminary test indicated a charging voltage of 14.2 volts at both the battery and the alternator B+ terminal. A hand-held inductive ammeter indicated a 46.8-amp charging rate. Our immediate Diagnostic Dilemma was why the battery was going dead when the alternator was charging at 14.2 volts and 46.8 amps.

It’s always much better to check charging voltage by connecting a professional-level scan tool and, while we’re at it, check for diagnostic trouble codes. Some PCMs are capable of self-diagnosing the charging system while some merely indicate a high or low voltage condition. Other PCMs provide bi-directional control of the charging system.

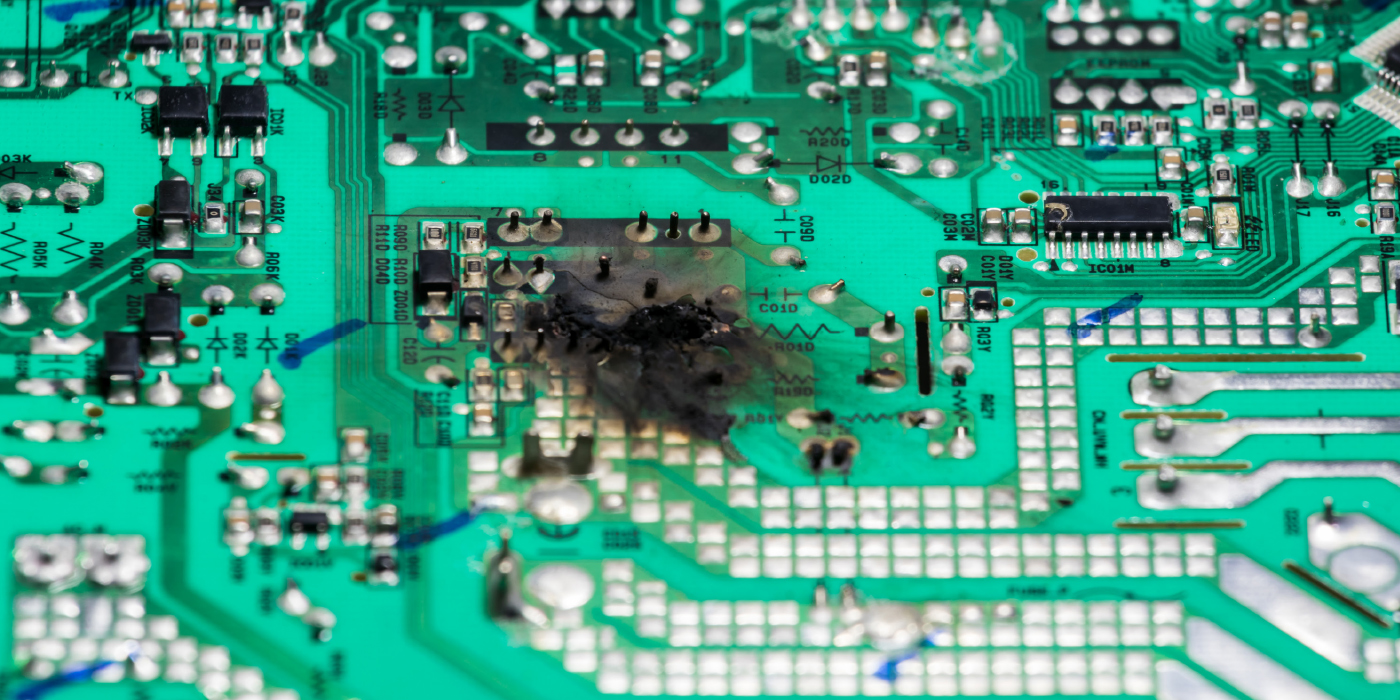

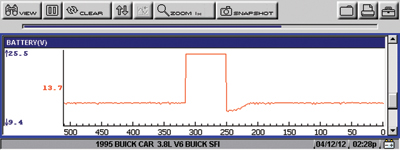

While it’s always best to consult a professional database before testing any charging system, the scan tool provided the key to solving the problem. By graphing the battery voltage through the PCM, we could observe an instant rise from 14.2 volts to over 25 volts. Because I don’t always trust data stream PIDs (parameter identification data), I connected my own scan tool, which verified that, indeed, the PCM was “seeing” 25 volts in the system while, in fact, the voltage had actually dropped to less than 13.7 volts at the alternator B+ terminal with the engine running.

After verifying ground connections and testing for other wiring problems, I concluded that the PCM wasn’t reading system voltage correctly. If, indeed, the charging voltage had been that high, we’d have seen the headlamps flare and the on-board electronics would already have failed.
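The cross-check I used here, comparing the PCM's voltage PID against a direct measurement at the alternator B+ terminal, can be sketched in Python. The 0.5-volt tolerance and the function name are assumptions for illustration, not a published specification.

```python
def voltage_pid_is_plausible(pid_volts, measured_volts, tolerance_volts=0.5):
    """True if the scan tool PID agrees with a direct meter reading.

    tolerance_volts is an ASSUMED allowance for wiring voltage drop
    and meter differences.
    """
    return abs(pid_volts - measured_volts) <= tolerance_volts


# The Park Avenue: PCM "saw" 25 volts while B+ measured under 13.7 volts.
print(voltage_pid_is_plausible(25.0, 13.7))  # False
print(voltage_pid_is_plausible(14.2, 14.1))  # True
```

A large disagreement like this one points toward the PCM or its power and ground circuits rather than the alternator, which is why those circuits need to be verified before condemning the module.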

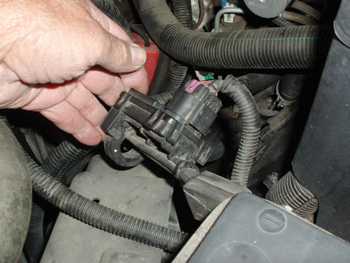

According to our local jobber, Buick Park Avenues had three different alternator options for 1995. I was surprised when I noticed that, instead of the conventional flat, two-wire regulator connector, this alternator had the more modern single-wire connector. The single wire suggested that the PCM controls the alternator.

Following that conclusion, I tested voltage at the single “L” wire and found that when the alternator was charging, the voltage varied from about 10.0 to 11.0 volts. When the alternator quit charging, the L-wire voltage dropped to zero. Although the L wire was probably producing a digital signal (I didn’t have my lab scope handy), it was obvious that the change in the wire’s voltage was directly related to the voltage PID in the scan tool’s data stream. In other words, the PCM deactivated the alternator when it “saw” 25 charging system volts.

The explanation of the original symptoms became very clear. First, the instrument lights were probably dimming because the battery was nearly dead when the PCM deactivated the alternator. Second, the PCM consistently deactivated the alternator when the scan tool data PID approached 25 volts. Last, a quick review of my diagnostic database revealed that the false voltage PID and related driveability complaints were consistent with a failed PCM.

After testing all powers and grounds to the PCM, the obvious solution to this Diagnostic Dilemma was to replace the PCM. The next day, after the battery was thoroughly charged and the remanufactured PCM was installed, the charging voltage hovered at 13.5-13.8 volts at room temperature.

Because most vehicles produced in 1995 were a combination of OBD I and OBD II technology, they were stand-alone systems that don’t always follow conventional diagnostic practice. While there’s a lot more to be said about charging system diagnosis, Diagnostic Dilemmas like the above example prove once again that we should never assume that a “one size fits all” theory of charging system diagnostics will work on our father’s Oldsmobile, let alone the cars of today and tomorrow.